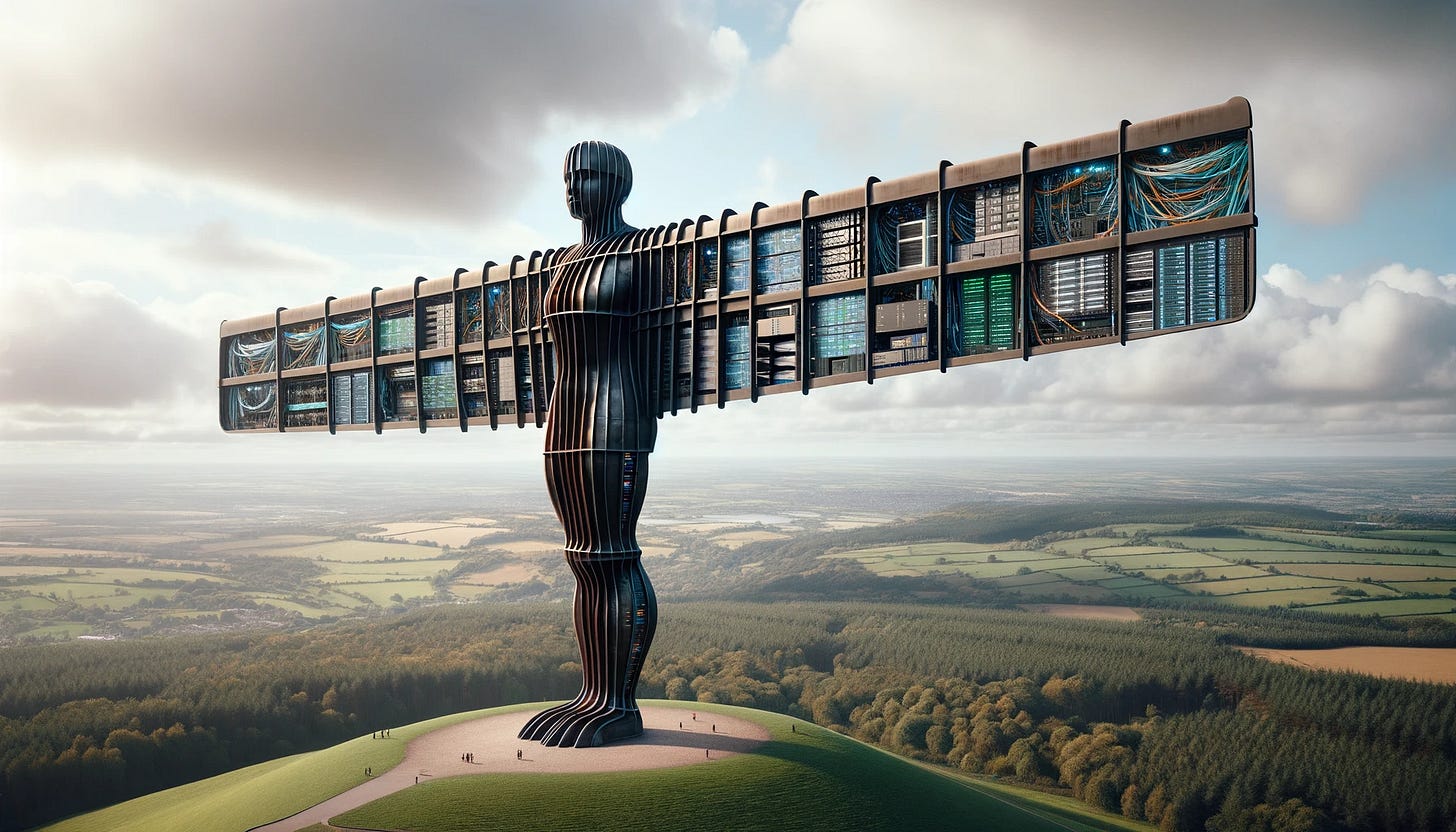

“The Angel of the North as a Data Center” - GPT-4

*Firstly, TxP, a tech and policy community I co-organise, is running a £5,000 blog prize for answers on how to fix Britain. We are collaborating with The New Statesman Spotlight, Civic Future, and a killer judging panel to deliver some awesome cash and non-cash prizes. I would strongly encourage you to enter for the chance to more than recoup your holiday expenses.*

If you ever, like me, trawl the comments section of a YouTube video or news article on AI, you are likely to find a certain phrase uttered: “Garbage in, Garbage Out”.

This has become a well-worn meme: the idea that poor-quality data leads to poor technological outputs (through bias or inaccuracy, for example). It is a pithy observation, but public understanding of the underpinnings of AI is often reduced to this glib line.

Leading AI labs have listed data as one of the three main drivers of AI progress, alongside talent. The third is access to computing resources, or compute. The importance of compute to labs and industry has been well known for a few years now, but politics has not kept up.

Together, the scaling of compute and data has helped bring about the ChatGPT revolution, with the processing power of state-of-the-art models doubling every six to nine months.
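To put that doubling rate in annual terms, here is a minimal back-of-the-envelope sketch. It assumes smooth exponential growth, which is a simplification, and the function name `annual_growth_factor` is my own illustration rather than anything from a report.

```python
def annual_growth_factor(doubling_months: float) -> float:
    """Growth multiple over 12 months, assuming smooth exponential growth."""
    return 2 ** (12 / doubling_months)


# The two ends of the "six to nine months" range quoted above.
for months in (6, 9):
    factor = annual_growth_factor(months)
    print(f"Doubling every {months} months is about {factor:.1f}x per year")
```

On those assumptions, a six-to-nine-month doubling time works out to somewhere between roughly 2.5x and 4x growth per year.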

Whether it is new driverless car systems, advances in weather forecasting, or new disease monitoring tools, our ability to solve complex industrial tasks has been catalysed by not just better data, not just better training, but a transformation in our computing capabilities.

But compute is not cheap. The H100, the current leading AI chip, costs around $30,000, which makes it hard for startups and academics to get hold of these resources. Concentration across the compute supply chain (NVIDIA dominates chip design, ASML dominates the lithography equipment used in semiconductor manufacturing, TSMC dominates fabrication, and AWS, Microsoft and Google run the cloud market) also presents an economic and geopolitical challenge around who has access to what resources in order to build the future.

Who has access to what is partly an empirical question, and unfortunately we have very little understanding of the answer. Countries can be secretive about shipments of leading chips. The UK is the only country in the world to have carried out a comprehensive review of its compute capacity. We cannot govern what we do not know, and compute is no different.

That’s why I’m really excited to launch, through the Tony Blair Institute, the world’s first global review of national compute capacity. Partnering with Omdia, we delivered a report with a measurement framework and data explorer tool, titled ‘The State of Compute Access’.

We found that a stark digital divide is forming:

Meta and Microsoft have ordered 150,000 H100 GPUs this year, 30x more than is estimated for the UK’s National AI Research Resource.

23% of the countries in the Index have local access to cloud services optimised for ML through AWS, Google and Microsoft. 47% don’t have any local access deals in place at all.

50% of countries had not invested in any quantum infrastructure, and only 35% of countries had academic agreements in place to access quantum infrastructure.
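For a rough sense of scale on the first of those figures, the sketch below combines it with the roughly $30,000 H100 price quoted earlier in this post. It assumes the 150,000 figure is a single order total, that "30x more" means a ratio of 30, and that every chip is bought at that price. The report confirms none of these, and the variable names are illustrative.

```python
H100_UNIT_PRICE_USD = 30_000  # approximate price quoted earlier in this post
ORDERED_GPUS = 150_000        # order figure cited above (assumed to be one total)
RATIO_TO_UK = 30              # "30x more", read here as a ratio of 30

# Implied size of the UK estimate, and the order's cost at the quoted price.
implied_uk_gpus = ORDERED_GPUS // RATIO_TO_UK
order_cost_usd = ORDERED_GPUS * H100_UNIT_PRICE_USD

print(f"Implied UK estimate: about {implied_uk_gpus:,} GPUs")
print(f"Order at quoted price: about ${order_cost_usd / 1e9:.1f}bn")
```

Under those assumptions, the UK estimate comes to about 5,000 GPUs, against an order worth about $4.5bn.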

We propose a radical shift in policy, with international development organisations in particular needing to catch up (see more recommendations in the report). Moreover, the UK has demonstrated leadership on building AI and compute governance capacity at home. It should now export that to the world.

Governments need to make scaling access to compute a strategic imperative for the coming decade. If they don’t, other governments will, leaving the non-believers behind. In the meantime, I hope the new phrase uttered in an AI article’s comments section near you will no longer be ‘Garbage in, Garbage Out’, and will instead be ‘Shoddy Servers, Shoddy Service’.
