Jumpers for Goalposts

The latest version of MidJourney captures many of the current issues in the AI capability discourse

*Firstly, TxP, a tech and policy community I co-organise, is running a £5,000 blog prize for answers on how to fix Britain. The deadline is January 7th, setting the ultimate holiday task. I would strongly encourage you to enter for the chance to more than recoup your holiday expenses.*

If you are actually spending Christmas with your loved ones, you may not have noticed that the latest version of MidJourney just dropped. The sixth version of the ultimate AI image generator has set much of Twitter purring, with some Twitter threads making version 5, a mere 9 months older, look the far inferior sibling.

But what does this continued progress really mean for the creative process? The latest quality jump tells us a few things, and doesn’t tell us others.

Earlier this year, these were the sorts of comments leveled at AI image generation tools:

“AI inaccurately presents real-world objects.” (e.g., producing images of cars that don’t have doors)

Cast your eyes over numerous outputs from MidJourney V6, and you will find many of these issues beginning to be pretty well addressed. Words on signs are actually starting to make sense. Although properties of some objects can still be a bit strange, there has been significant improvement in this domain. And AI fingers being messed up? Finding old tweets to showcase those deficiencies is like digging up a time capsule.

There are still significant issues that remain with the output of these tools (such as default stereotypes, copyright jailbreaking, being trained on child abuse images, consistency of output, etc).

While these issues need a serious commitment to address, it is remarkable how many of the technical complaints about output that were so popular months ago (e.g. finger-gate) have been rendered irrelevant.

This is going to happen again and again. Statements will be made to suggest that AI applications are not actually very good. A few months will pass with new updates to said applications. Those statements will no longer be true, and will be replaced by new ones that simply up the criteria for success. The snarks will remain snarking. The evangelists evangelizing. All the while, those that begin to apply these tools appropriately will actually make stuff happen.

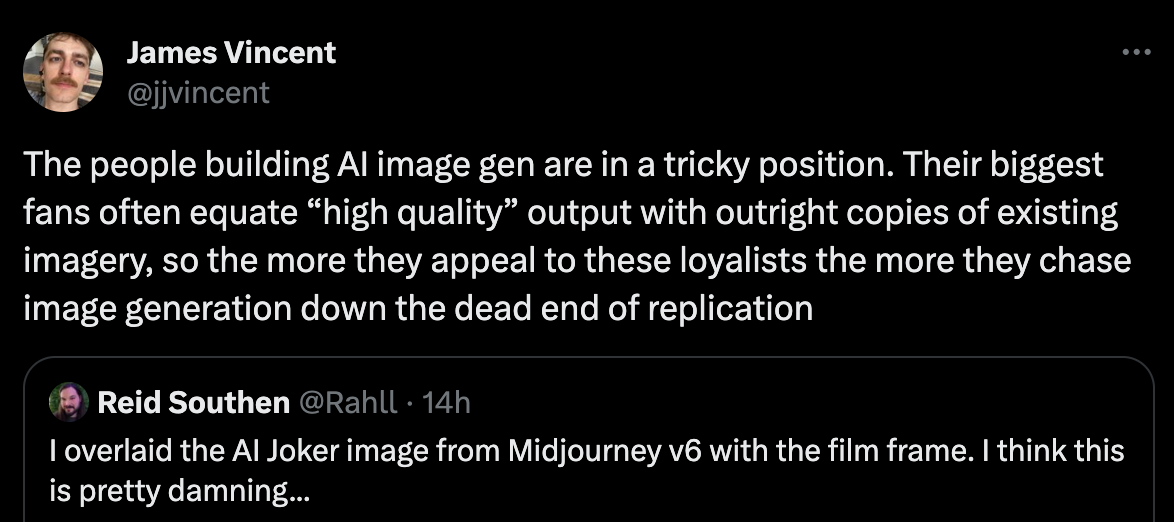

Now, the latest charge placed against AI image generation is no longer one of quality, but originality.

Part of the technical explanation for these views stems from a term called data leakage.

All substance, no style

Data leakage refers to a problem in machine learning where information from outside the training dataset is used to create the model. This can occur when data that would not be available at the time of prediction is inadvertently included in the training process. Data leakage can lead to overly optimistic performance estimates during training and validation but poor performance when the model is used in real-world situations.
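To make that concrete, here is a minimal Python sketch of how leakage flatters a score. It assumes a deliberately silly toy "model" that can only memorise what it has seen, and data whose labels are coin flips, so there is nothing real to learn. The names `make_examples` and `MemorizingModel` are made up for illustration; this is not how MidJourney or ChatGPT work internally.

```python
import random


def make_examples(n, rng):
    """Random 8-digit feature tuples with coin-flip labels (no real signal)."""
    return [
        (tuple(rng.randint(0, 9) for _ in range(8)), rng.randint(0, 1))
        for _ in range(n)
    ]


class MemorizingModel:
    """Toy model: recalls exact training examples, otherwise guesses the majority label."""

    def fit(self, examples):
        self.table = dict(examples)
        labels = [label for _, label in examples]
        # Fall back to whichever label was most common in training.
        self.default = int(sum(labels) * 2 >= len(labels))
        return self

    def predict(self, features):
        return self.table.get(features, self.default)


def accuracy(model, examples):
    hits = sum(model.predict(x) == y for x, y in examples)
    return hits / len(examples)


rng = random.Random(0)
train = make_examples(500, rng)
test = make_examples(200, rng)

# Clean setup: the model never sees the test examples.
clean_model = MemorizingModel().fit(train)
# Leaky setup: the test examples have slipped into the training data.
leaky_model = MemorizingModel().fit(train + test)

clean_score = accuracy(clean_model, test)
leaky_score = accuracy(leaky_model, test)

print(f"clean score: {clean_score:.2f}")
print(f"leaky score: {leaky_score:.2f}")
```

The clean model should hover around a coin flip, because there is nothing to learn. The leaky model should score close to perfect on the very same test, despite being no smarter. That gap is the "overly optimistic performance estimate" in miniature.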

With MidJourney now churning out hyper-realistic versions of Thanos, the Joker, and many other poster boys of the redpilled generation, it is highly likely that images that, for legal reasons, arguably shouldn’t be in training sets are in fact in them.

ChatGPT faces a similar issue when standardized test scores are held up as the hallmark of its quality. It is highly likely that these systems were trained on the very tests that they were evaluated on.

Furthermore, the issues over IP infringement still abound. As Gary Marcus said, ‘“Data leakage” is often just another word for “theft.”’

So, will the realm of AI image generation just be cool remakes that end up getting taken to court?

There was a hubbub around controllist AI art a few months ago. This was art designed to integrate words, shapes, or symbols within an image in a way that substantially changes the overall artistic artifact. The likes of Harry Law argue that, while more unique, this may not constitute a new genre of art altogether.

This quest for originality is not merely an art-based critique of AI, with many leading figures viewing novel discovery as the next big breakthrough that the AI ecosystem is waiting for. Recent DeepMind breakthroughs in mathematics have been met with optimism by leading scholars, but for many they do not constitute anything game-changing in terms of problem-solving capability.

Given that Epoch research suggests that we are running out of real-world data, and there is growing evidence to suggest that synthetic data has significant limitations, the skeptics may end up being proved right.

Throwing more data and more compute at these tools will eventually hit a wall. More and more startups will need to emerge with the specific mission of resolving the remaining problems in these generative systems. But fixing fingers is one thing. Spotting something in quantum mechanics that the scientific community hasn’t is another.

That all being said, one year ago, AI image tools were making pizzas that looked like my insides. Now the emerging criticism of these tools is that they can make hyper-realistic representations, but not offer anything original. So, how long until the common criticism is ‘Yes, AI can make Nobel Prize-winning discoveries, but can AI make Nobel Prize-winning discoveries on a Nintendo 64 with no Wi-Fi connection?’

*P.S. TxP, the tech and policy community I help to run, is looking to run an event on AI and creativity in the new year. If you have ideas for cool exhibition locations or speakers, hit me up.*

Of the Week - My Favourites

I continue to hold the view that the British media is one of the big structural barriers to effective policy and politics in the UK. So this podcast episode is catnip to me. Sarkar and Dunt agree on a worrying number of things here, which makes me wonder: if everyone technically ‘agrees’, why isn’t anything done about it?

YouTube Video: Geowizard - The adventure blossoms and intensifies [Tenner in my pocket #2]

In this video, a Brummie just walks through the countryside for a few days with only a tenner, the gorgeous countryside, and the good faith of locals to get him further North. For a video so simple, it is weirdly elegant.

Article: Towards Responsible Governance of Biological Design Tools - Moulange et al

A lot of great NeurIPS output over the last week, but this one in particular jumped out to me. The convergence of AI and biology is going to be one of the drivers of this century, and the authors propose a list of measures that could be taken to reduce the risk of highly capable BDTs.